Google AI Tools (2026): The Complete Honest Guide

If you’ve been trying to make sense of Google AI tools in 2026, you’re not alone. Most people are already using them every single day — they just don’t realize it. Gmail finishing your sentence, Search answering before you finish typing, your phone camera identifying a plant you photographed on a walk. That’s all AI, quietly running underneath tools you’ve used for years.

None of it feels dramatic because it arrived gradually. No big launch moment. No notification saying “AI is now part of your life.” It just happened, feature by feature, update by update, until it became structural.

I’ve spent real time going through what Google has actually built — not just watching demo videos — and this is my honest take on what’s worth your attention, what’s still catching up, and where everything is heading. I have opinions. I’ll share them.

Why Google AI Tools Work Differently Than You Think

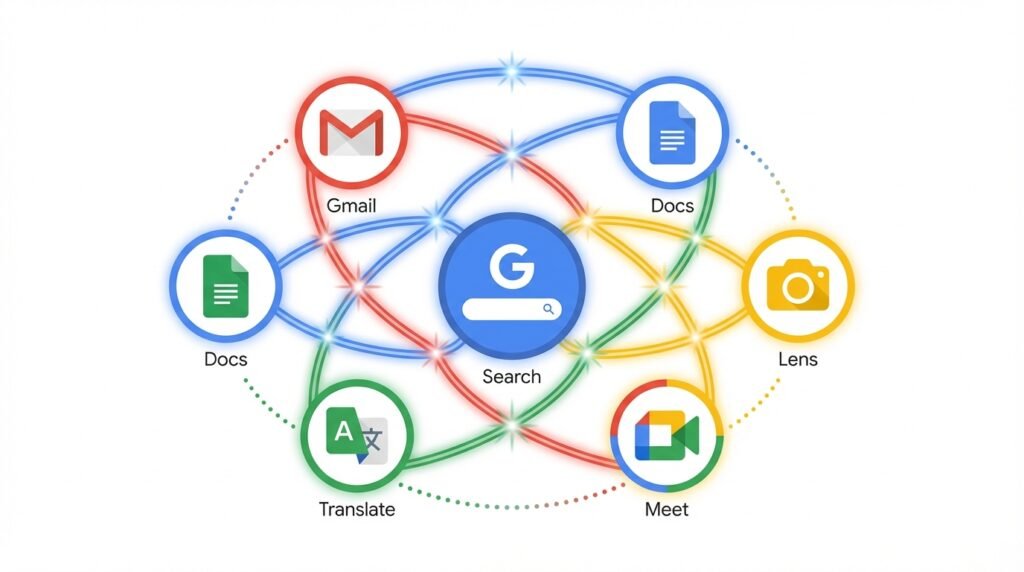

Most tech coverage treats Google’s AI like it’s one thing — the chatbot, the new search feature, whatever got announced at the latest conference. That framing misses what Google has actually spent the last decade building.

The intelligence isn’t layered on top of Google’s products like paint on a wall. It’s underneath them. Woven into Search, Gmail, Docs, Sheets, Translate, Lens, Meet, and Android at a structural level. Remove it and all of those products get noticeably worse, immediately. That’s how deep it goes.

Then there’s the developer side — which gets almost no mainstream coverage but might be the most important part of the whole story. Through Vertex AI and Google AI Studio, any developer anywhere can tap into the same underlying models powering Google’s own products. A solo freelancer and a global enterprise are drawing from the same well. That means Google’s AI ends up inside thousands of apps most people have never heard of. That’s the real reach of what’s been built here.

For a full overview of what Google is building, their official AI page is worth bookmarking.

Gemini: The Google AI Model Behind Most of It

Gemini is Google’s core model and, if you use any Google product regularly, you’ve almost certainly interacted with it already — just without a label on screen telling you so.

What makes it genuinely different from earlier systems is the multimodal design. Not a language model with image support bolted on after the fact. One unified system handling text, images, audio, video, and code simultaneously. Hand it a photograph and a written question at the same time — it processes both together, not one then the other.

That matters because real work rarely involves a single format. A doctor reviewing a scan alongside written notes. A designer reading client feedback while looking at a mockup. A student following a diagram and a written explanation side by side. Gemini is built for that kind of mixed, layered thinking — and it shows when you use the tools built on top of it.
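To make "a photograph and a written question at the same time" concrete, here is a minimal sketch of what a mixed request body can look like when sent to the Gemini API over REST. It only builds the JSON and sends nothing. The helper name `build_multimodal_body` and the placeholder image bytes are my own inventions for illustration.

```python
import base64
import json


def build_multimodal_body(question: str, image_bytes: bytes,
                          mime_type: str = "image/jpeg") -> str:
    """Return a JSON request body pairing one image with one text question."""
    image_part = {
        "inline_data": {
            "mime_type": mime_type,
            # Binary image data travels base64-encoded inside the JSON body.
            "data": base64.b64encode(image_bytes).decode("ascii"),
        }
    }
    text_part = {"text": question}
    # Both parts sit in the same "parts" list, so they arrive as one prompt.
    return json.dumps({"contents": [{"parts": [image_part, text_part]}]})


if __name__ == "__main__":
    # Placeholder bytes standing in for a real photo (assumption for the demo).
    fake_image = b"placeholder bytes, not a real photo"
    print(build_multimodal_body("What plant is this?", fake_image))
```

The point of the shape is that the image and the question are siblings in one list, not two separate calls.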

Three Versions — More Different Than They Look

Google launched Gemini in three tiers, and later releases added a lighter Flash tier alongside them. The differences between the original three matter more than typical product versioning suggests.

Gemini Ultra is the top-end version. Maximum reasoning power, longest context windows, best performance on demanding tasks. Most people won’t touch it directly — it runs underneath enterprise and research tools where raw capability is the only thing that counts.

Gemini Pro is the workhorse. It powers most consumer-facing features — strong enough for real tasks, efficient enough to serve billions of users. If you’ve used AI suggestions in Gmail or Docs lately, this is almost certainly what was running.

Gemini Nano runs on-device, without sending data to a server. Faster, more private, works offline. I think this one gets undersold constantly. On-device AI isn’t a footnote — in a world where people are increasingly wary about what their phones are uploading, running things locally is a meaningful step.

Google AI Studio and Vertex AI: Built for Developers

Not building software? Skip ahead. If you are — or if you’re thinking about it — these two platforms are probably the most practically useful things in this entire guide.

Google AI Studio

AI Studio is the fast lane. Browser-based, nothing to install, no server to configure. You open it, start testing prompts with Gemini, watch what comes back, adjust, iterate. You can get from “I wonder if this would work” to a real testable prototype in the same afternoon. I’ve watched developers do exactly that, and it’s not an exaggeration.

When something works the way you want, you grab an API key and plug it into your application. That’s the whole early-stage flow. Anyone getting started with AI development should start with AI Studio: it has a free tier and there is nothing to install.
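For the curious, here is roughly what "plug it into your application" can look like. This is a minimal sketch using only Python's standard library against the Gemini API's REST endpoint. The model name `gemini-2.0-flash` and the `GEMINI_API_KEY` environment variable are assumptions for illustration, so use whichever model AI Studio currently lists and store your key however your project prefers. The helper names `build_request` and `ask` are made up for this example.

```python
import json
import os
import urllib.request

API_ROOT = "https://generativelanguage.googleapis.com/v1beta"


def build_request(prompt: str, api_key: str,
                  model: str = "gemini-2.0-flash") -> urllib.request.Request:
    """Build (but do not send) a text-only generateContent request."""
    body = json.dumps({"contents": [{"parts": [{"text": prompt}]}]})
    return urllib.request.Request(
        url=f"{API_ROOT}/models/{model}:generateContent",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def ask(prompt: str, api_key: str) -> str:
    """Send the request and return the text of the first candidate reply."""
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=30) as resp:
        payload = json.load(resp)
    return payload["candidates"][0]["content"]["parts"][0]["text"]


if __name__ == "__main__":
    key = os.environ.get("GEMINI_API_KEY")  # assumed variable name
    if key:
        print(ask("Say hello in five words.", key))
    else:
        print("Set GEMINI_API_KEY to send a real request.")
```

Keeping the key in an environment variable rather than in the source file is the one habit worth forming from day one.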

Vertex AI

Vertex is where things get serious. Google Cloud’s full enterprise AI platform — training, deployment, monitoring, version control, automated pipelines. Everything needed to keep AI running in production when real users depend on it.

The Model Garden is the feature I’d highlight first. Instead of building a model from scratch, which is expensive, slow, and demands deep expertise, you start with a pre-built foundation model and customize it. That shift can cut months off typical timelines: work that might have taken a team six months can ship in a matter of weeks.

The two platforms work together naturally. Prototype in AI Studio, scale in Vertex. It’s a sensible pipeline and more accessible than it used to be.

Google Workspace AI Features: Honest Assessment

I’ll say upfront — I was unconvinced about AI in productivity tools for a long time. Early versions felt like features built for demos, not for daily work. I’ve updated that view. These have gotten genuinely useful in ways I didn’t expect.

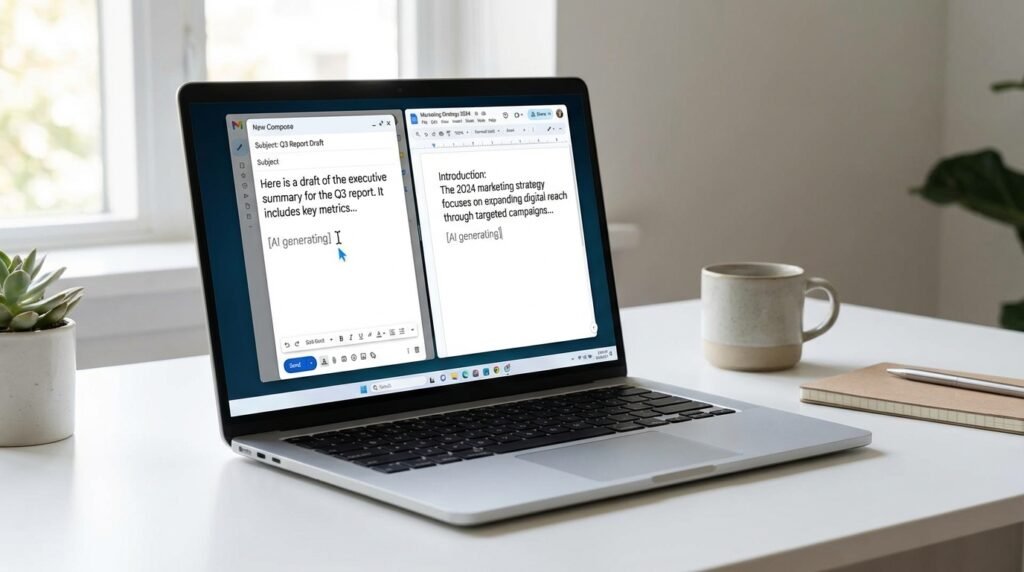

Gmail

Thread summarization is the one I actually use most. Drop into a long chain you haven’t touched in three days, get the essential points in under ten seconds. No reading through fifty messages. Full email drafting from a short prompt is also legitimately useful — describe what you want to say, get a complete draft, edit it to sound like you, send it. Smart Compose and Smart Reply are so baked in I barely notice them anymore, which is exactly how good ambient features should feel.

Google Docs

Most valuable at the start of a project. Blank page to working draft in sixty seconds. From there you edit, cut, restructure, improve. The hardest part of writing — getting something on the page to react to — is handled. Use the output as raw material, not a finished product, and it saves real time.

Google Sheets

Natural language queries in Sheets close a real gap for non-technical users. Type a plain English question about your data, get a chart or formula back. The people who benefit most are those who know what insight they’re after but don’t know how to write the formula to find it. That’s a lot of people — and this removes a genuine wall.

Google Meet

Auto transcription and post-meeting summaries. Decisions made, action items assigned, key threads captured — without anyone playing note-taker. If you’re in back-to-back meetings all day, this quietly becomes load-bearing. You notice when it’s missing.

What These AI Tools Did to Google Search

Search worked the same way from the late nineties until pretty recently. Keywords in, links out, you click around until you find what you need. That loop is breaking — and the change is real when you actually pay attention to it.

Natural language queries work properly now. You can search the way you actually think and talk. “What’s a good laptop for a design student on a tight budget” returns useful results. The system understands intent, not just isolated words. For anyone who used to spend time carefully rewording searches to get decent results — that’s a real quality of life improvement.

AI Overviews synthesize answers from multiple sources and surface them at the top. They got things embarrassingly wrong when they first launched — the examples that spread on social media were genuinely bad — and they’ve improved a lot since. For research queries they save real time. For anything accuracy-critical, I still check the sources underneath. Both things can be true.

Conversational search is the piece that changes the experience most. Follow-up questions work. Context carries between queries. You can drill progressively deeper into a topic without starting over every time. It feels less like querying a database and more like talking to someone who’s actually read everything.

Google Lens: Seriously Underused

Lens is the most underused tool in the whole lineup and I genuinely don’t know why. Point your camera at something — a plant, a building, a math problem on paper, text in a language you don’t speak — and it identifies it instantly.

Camera translation still surprises people the first time they see it. Point at a foreign-language menu or street sign and the translation appears overlaid directly on your screen. I used it throughout an entire trip across East Asia. Worked reliably enough that I barely needed anything else for reading signs and menus. Not perfect every single time. Good enough every single time.

NotebookLM: The Research Tool That Fixed the Trust Problem

AI research tools have a credibility problem that’s completely earned. Many hallucinate confidently — inventing citations, fabricating quotes, presenting wrong information with the same tone used for correct information. It’s made a lot of people reasonably skeptical of using AI for serious work.

NotebookLM is designed around solving exactly that. Instead of answering from broad training data, it grounds its answers in the documents you upload: PDFs, Google Docs, research papers, reports. Nothing is pulled in from elsewhere on the internet. Responses come with citations pointing back to the passages they draw on, so you can check each claim against something you gave it. It can still misread a source, which is exactly why those citations matter.

For anyone dealing with large document sets this changes the workflow completely. Upload a lengthy report and ask targeted questions. Upload competing research papers and ask where they agree or contradict each other. Upload project notes and ask for a summary of decisions made. It handles the reading. You handle the judgment. That’s the right division of labor.
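If "answers only from your documents" sounds abstract, the toy below shows the basic idea. To be clear, this is not how NotebookLM works internally. It is a deliberately crude word-overlap lookup I wrote for illustration, and the file names and passages are invented. What it does capture is the contract: every hit names its source, and no match means an empty answer rather than a made-up one.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounded_lookup(question: str, sources: dict[str, list[str]],
                    min_overlap: int = 2) -> list[tuple[str, str]]:
    """Return (source_name, passage) pairs that share words with the question.

    An empty list means "not found in your sources"; nothing is invented.
    """
    question_words = tokenize(question)
    hits = []
    for name, passages in sources.items():
        for passage in passages:
            overlap = len(question_words & tokenize(passage))
            if overlap >= min_overlap:
                hits.append((overlap, name, passage))
    # Strongest matches first; each result keeps its source attached.
    hits.sort(key=lambda hit: hit[0], reverse=True)
    return [(name, passage) for _, name, passage in hits]


if __name__ == "__main__":
    docs = {
        "report.pdf": ["The launch moved to March after the vendor delay.",
                       "Budget was approved in January."],
        "notes.txt": ["Team agreed to drop the mobile beta."],
    }
    for source, passage in grounded_lookup("Why the vendor delay for launch?", docs):
        print(f"[{source}] {passage}")
```

A real system would use far better matching than shared words, but the source-attached return value is the part that builds trust.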

The Audio Overview feature converts documents into a podcast-style conversation between two AI voices. I was dismissive until I tried it on a dense paper I’d been avoiding for weeks. Listened on a forty-minute walk, had a solid grasp of the main arguments by the time I got home. For people who absorb things better through listening, it’s more useful than it probably sounds.

You can try it free at notebooklm.google.com — no subscription needed to start.

Google Translate: Better Than Your 2014 Memory of It

A lot of people’s mental model of Translate is stuck about a decade behind what it actually is. The clunky word-by-word version that produced technically readable but obviously machine-generated text — that’s not what exists anymore.

Neural machine translation changed everything. The system processes full sentences in context, capturing grammar, tone, and meaning the way a human translator thinks about it — not converting words individually. For the language pairs most people actually need, results read like a person wrote them. Not always perfect. Consistently good enough to be genuinely useful.

Real-time conversation mode works in actual situations — two languages, one phone, both people talking naturally with a small but workable lag. Camera translation overlays the translated text directly on your screen. Non-negotiable travel feature for me at this point. Menus, signs, product labels, official documents. Point and read.

See the full range of supported languages on the Google Translate page.

Ethics and Safety: What Google Is Actually Doing

Skipping the part where I recite published principles at you. You can read those yourself. What matters more is what they actually do in practice.

Generated content is labeled visibly across products. When you’re reading a synthesized summary, there’s an indicator telling you that. Sounds basic. Isn’t universal. Matters more than it gets credit for.

The early AI Overview errors were real and some were genuinely embarrassing. Google fixed most of them quickly. The takeaway wasn’t that AI in search is wrong — it was that deploying AI into products used by billions carries real consequences when things go wrong, and speed of correction matters. They corrected fast.

DeepMind’s AlphaFold work deserves more than a bullet point. Predicting protein structures was a problem biology had been stuck on for fifty years. AlphaFold largely solved it. The downstream effects on drug discovery are still unfolding. That’s genuine scientific impact, not a product announcement dressed as research.

Google’s full ethical framework is published at Google AI Principles if you want to read it properly.

What’s Coming Next in Google AI

Everything above is the present. The present is genuinely impressive. What’s actively being built right now is a different level entirely.

Agentic AI: From Responding to Doing

Today’s systems respond. You ask, they answer. What’s coming is AI that acts — taking multi-step actions across multiple applications toward a goal you describe, not a command you typed.

Instead of asking it to draft one email, you describe an outcome: get the team aligned on the launch timeline by Friday. The system figures out the steps — draft the emails, schedule the meeting, update the doc, send the reminders — and executes them. Early prototypes are already running in controlled environments. The full version will change knowledge work more than anything since email. That’s not hyperbole.
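Here is a rough sketch of that "describe an outcome, let the system run the steps" loop. Everything in it is hypothetical: the four tools just return strings, and `plan` is a hard-coded stand-in for the part where a model would decide the steps. It is not based on any Google agent API. The one design choice I would keep in a real version is the `approve` hook, which lets a human veto a step before it runs.

```python
from typing import Callable


# Hypothetical tools. Real ones would call email, calendar, and document APIs.
def draft_emails(goal: str) -> str:
    return f"Drafted emails about: {goal}"


def schedule_meeting(goal: str) -> str:
    return f"Scheduled a meeting about: {goal}"


def update_doc(goal: str) -> str:
    return f"Updated the shared doc for: {goal}"


def send_reminders(goal: str) -> str:
    return f"Sent reminders about: {goal}"


TOOLS: dict[str, Callable[[str], str]] = {
    "draft_emails": draft_emails,
    "schedule_meeting": schedule_meeting,
    "update_doc": update_doc,
    "send_reminders": send_reminders,
}


def plan(goal: str) -> list[str]:
    """Stand-in planner: a real agent would ask a model to produce this list."""
    return ["draft_emails", "schedule_meeting", "update_doc", "send_reminders"]


def run_agent(goal: str,
              approve: Callable[[str], bool] = lambda step: True) -> list[str]:
    """Run each planned step in order, skipping any the approver rejects."""
    log = []
    for step in plan(goal):
        if approve(step):
            log.append(TOOLS[step](goal))
        else:
            log.append(f"Skipped {step} (not approved)")
    return log


if __name__ == "__main__":
    for line in run_agent("launch timeline by Friday"):
        print(line)
```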

Project Astra: Google’s Universal Assistant

Astra is the most ambitious thing Google is working on. A universal assistant that perceives the world continuously through cameras and microphones, holds context across days and weeks, and responds in real time with genuine awareness of its surroundings.

Whether it arrives on schedule is genuinely uncertain — big AI research projects frequently land differently than announced, and I’d be surprised if this one didn’t. But the direction it points, ambient persistent intelligence instead of session-based reactive prompting, is where the whole field is heading regardless.

Better Multimodal Capabilities

Near-term: richer multi-format inputs, video and spreadsheet and document analyzed together in one pass. Longer context so AI can hold continuity across a weeks-long project rather than a single session. These aren’t distant features — early versions are already appearing in current tools.

TPUs: The Hardware Making It All Possible

Google’s Tensor Processing Units are custom chips built specifically for AI. They never make headlines because hardware isn’t exciting. But they’re what makes running large models fast enough and cheap enough to work at global scale. Without continued TPU development, the pace of everything else slows considerably.

Final Thoughts on Google AI in 2026

Here’s what I keep coming back to: the integration is the story. Not any single feature or product. The fact that intelligence runs through the entire ecosystem — search to email to documents to camera to translation — at a scale reaching billions of people daily is what makes Google’s position genuinely hard to replicate.

Start somewhere useful right now: Workspace features if you live in Google’s ecosystem, Lens if you haven’t tried it properly, NotebookLM if you do any research or document-heavy work. These are ready. They work. They save real time on real tasks — not demo tasks.

For developers: the infrastructure to build serious AI-powered products has never been more accessible. If you’ve been waiting for the right moment, stop waiting.

For everyone else: the shift toward agentic, ambient AI isn’t a future scenario. It’s arriving gradually inside tools you already use, in ways most people won’t register until they suddenly can’t imagine working without them. That’s how the best technology always arrives.

Understanding what’s actually here — not the hype version, not the fear version, the real version — puts you in a better position to use it well. That’s always been true with technology. With this one, it’s especially true.
